Jason Voorhees

𝕸𝖊𝖗𝖈𝖊𝖓𝖆𝖗𝖞 𝕮𝖔𝖗𝖕 • 𝟐𝟎𝟐𝟒🥇

- Joined: May 15, 2020
- Posts: 85,587
- Reputation: 254,843

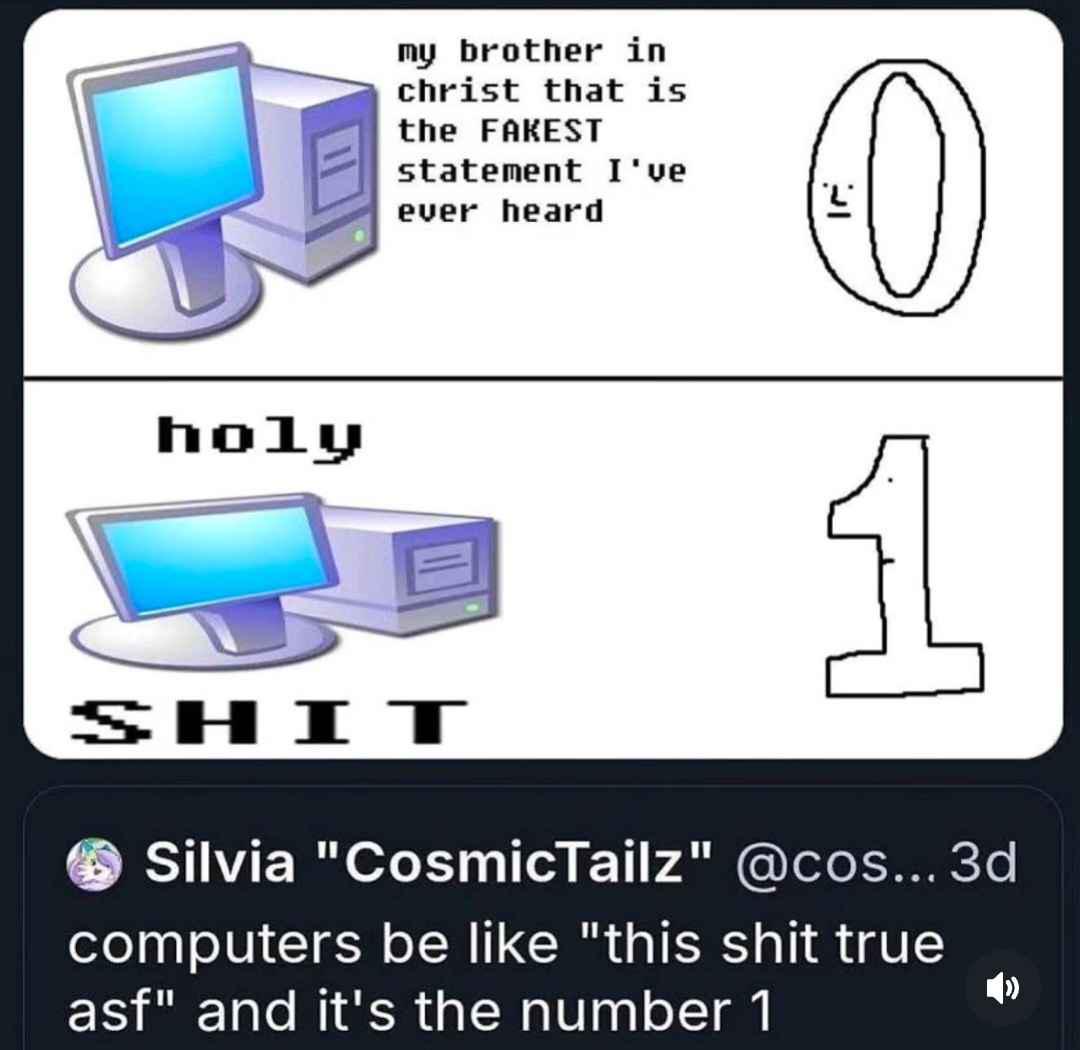

I'll keep it as simple as possible so anyone can understand. You see, all computers store everything as 0s and 1s. Everything is long sequences of 0s and 1s, but the problem is that binary floating-point systems have had rounding errors since the dawn of computers. 0.1 + 0.2 ≠ 0.3 because most decimal fractions (0.1, 0.2 and 0.3 included) cannot be represented exactly in 0s and 1s. In standard double precision the result comes out as 0.30000000000000004, or something equally cursed.
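If you want to see it with your own eyes, here's a minimal Python sketch (Python is just my pick for illustration, nothing in the thread depends on it). It shows the famous result and what the literal `0.1` actually stores once it's squeezed into a 64-bit binary double:

```python
import math
from decimal import Decimal
from fractions import Fraction

total = 0.1 + 0.2
print(total)          # 0.30000000000000004
print(total == 0.3)   # False

# The literal 0.1 is stored as the nearest binary double, which is
# slightly MORE than one tenth. Decimal(float) shows that exact value.
print(Decimal(0.1))   # 0.1000000000000000055511151231257827021181583404541015625

# As a fraction its denominator is a power of two (2**55), which is why
# no finite string of binary digits can ever equal exactly 1/10.
print(Fraction(0.1))  # 3602879701896397/36028797018963968

# The everyday workaround: compare with a tolerance instead of ==.
print(math.isclose(total, 0.3))  # True
```

The tolerance check is fine for "is this roughly right" questions, but it doesn't make the underlying bits agree, which is the part that matters later on.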

It's a fundamental property of how binary floating point (IEEE 754) works. For 50+ years we have mostly ignored this because who tf cares, but now with AI in the picture you can't simply ignore it. Millions of matrix multiplications per second, millisecond inferences, and the expectation of perfect consistency across training runs mean that even tiny errors can get magnified into something catastrophic across 175 billion parameters.
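The "magnified" part comes from two properties you can poke at in a few lines: floating-point addition is not associative (regrouping changes the answer), and per-step rounding errors pile up over many operations. This is a small Python sketch; `naive_sum` is a hypothetical helper I'm using for illustration, and the point about GPU reductions in the comments is a general observation, not a claim about any specific framework:

```python
def naive_sum(values):
    """Plain left-to-right float accumulation. Written as an explicit loop
    because the built-in sum() uses a more accurate (compensated) algorithm
    for floats since Python 3.12, which would hide the effect."""
    total = 0.0
    for v in values:
        total += v
    return total

# Regrouping the same three numbers changes the result.
left = (0.1 + 0.2) + 0.3
right = 0.1 + (0.2 + 0.3)
print(left, right, left == right)  # 0.6000000000000001 0.6 False

# Same inputs, different order, different answer. Parallel hardware can
# split a big sum across threads, so the effective order may vary per run.
print(naive_sum([1e16, 1.0, -1e16]))  # 0.0  (the 1.0 gets rounded away)
print(naive_sum([1e16, -1e16, 1.0]))  # 1.0

# Tiny per-step errors accumulate as the number of operations grows.
for n in (10, 1_000, 1_000_000):
    drift = abs(naive_sum([0.1] * n) - n / 10)
    print(f"n={n:>9,}: |error| = {drift:.3e}")
```

None of these errors is big on its own; the issue is that which one you get can depend on the order of operations.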

This isn't a huge problem in general. Neural networks don't need mathematical perfection; in fact, gradient descent actually loves a bit of noise and garbage for generalization. The problem is that here we are dealing with algorithms. There are ofc workarounds, like quantization and GPU tensor cores that do fast low-precision matrix math with FP32 accumulation, but none of them specifically cater to our needs, because we require deterministic, bit-exact reproducibility.
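To make "deterministic, bit-exact" concrete: one way (among several, and not something the tools above do) to get the same bits no matter the order is to accumulate exactly and round only once at the end. Here's a toy Python sketch using rational arithmetic; `exact_sum` and `naive_sum` are hypothetical names for illustration only:

```python
from fractions import Fraction
from itertools import permutations

def naive_sum(values):
    """Left-to-right float accumulation (explicit loop, since the built-in
    sum() uses a compensated algorithm for floats in Python 3.12+)."""
    total = 0.0
    for v in values:
        total += v
    return total

def exact_sum(values):
    """Order-independent reduction: add the exact binary value of each float
    as a rational number, then round to a float a single time at the end.
    Rational addition is associative, so every ordering gives the same bits."""
    acc = Fraction(0)
    for v in values:
        acc += Fraction(v)  # exact conversion, no rounding here
    return float(acc)       # the only rounding step

values = [1e16, 1.0, -1e16]
naive_results = {naive_sum(p) for p in permutations(values)}
exact_results = {exact_sum(p) for p in permutations(values)}

print(sorted(naive_results))  # [0.0, 1.0]  depends on the order
print(sorted(exact_results))  # [1.0]       same result for every order
```

Obviously exact rationals are way too slow for 175 billion parameters. The sketch only shows the property you'd want (one rounding step, independent of order); real systems usually chase determinism by pinning the order of reductions instead.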

can't even fathom dealing with that shit